The general vibe I see online is that the AI companies have not been doing particularly well in the reliability department. Both OpenAI and Anthropic publish reliability statistics on their status pages. Now, I’m not a fan of using the nines as a meaningful indicator of reliability, but since I don’t have access to any other signals about reliability for these two companies, they’ll have to do for the purposes of this blog post.

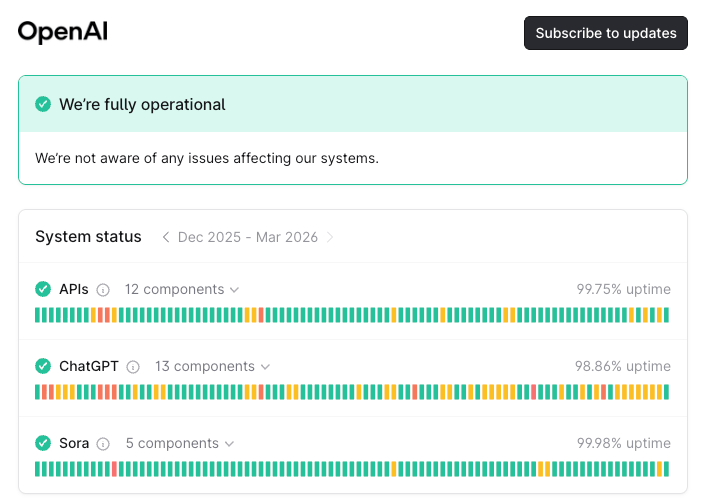

Here’s a screenshot of OpenAI’s status page:

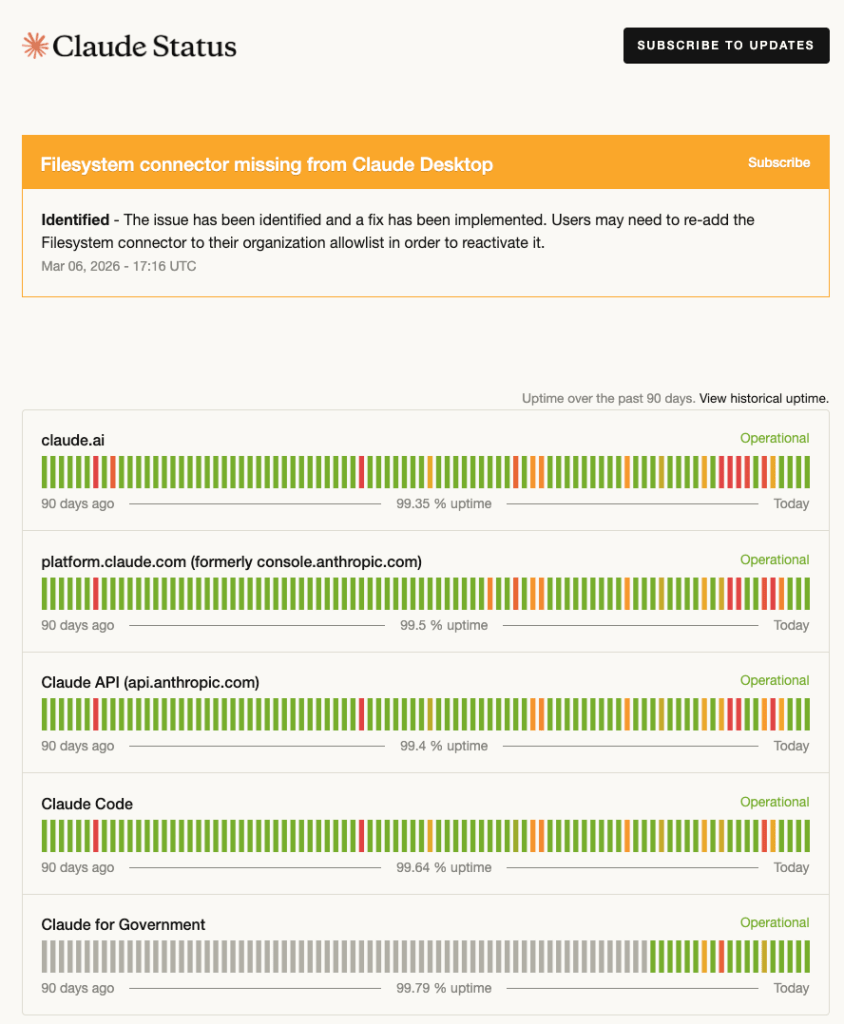

Here’s a screenshot of Anthropic’s status page:

And these numbers… well, they’re not great. With the exception of Sora, none of the services at either company makes it to 99.9% uptime (three nines). Surprisingly, ChatGPT, at 98.86% uptime, does not even make it to two nines (99%).
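To make the nines a bit more concrete, here is a back-of-the-envelope sketch in Python that converts an uptime percentage into a (fractional) count of nines and into hours of downtime. The function names and the 30-day window are my own choices for illustration; the status pages may well measure over a different window.

```python
import math


def nines(uptime_percent: float) -> float:
    """Fractional number of nines: 99% is 2 nines, 99.9% is 3 nines."""
    unavailability = 1.0 - uptime_percent / 100.0
    if unavailability <= 0:
        return math.inf  # 100% uptime has "infinitely many" nines
    return -math.log10(unavailability)


def downtime_hours(uptime_percent: float, window_days: float = 30.0) -> float:
    """Hours of downtime implied by an uptime percentage.

    The 30-day default window is an assumption, not something taken
    from either status page.
    """
    return (1.0 - uptime_percent / 100.0) * window_days * 24.0


if __name__ == "__main__":
    for pct in (98.86, 99.0, 99.9):
        print(
            f"{pct}% uptime: {nines(pct):.2f} nines, "
            f"{downtime_hours(pct):.1f} hours down per 30 days"
        )
```

By this arithmetic, 98.86% works out to a bit over eight hours of downtime in a 30-day window, versus well under an hour at three nines.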

I’ve seen speculation that reliability isn’t great because of high development velocity. Here’s Boris Cherny (the guy at Anthropic who wrote Claude Code) pushing back on that hypothesis.

A few days later, during a ChatGPT incident, I saw this post from Nik Pash at OpenAI:

This isn’t move fast and break things, but rather grow fast and overload things. These companies are in the business of providing LLMs, which are a new capability. Users are leveraging LLMs in new and innovative ways. The resilience engineering researcher David Woods uses the term florescence to describe this kind of rapid and widespread uptake.

As a consequence of this florescence, the load on the providers increases unexpectedly and dramatically: they weren’t able to predict the load, and they have struggled to keep up with it when it arrives. These LLM providers are running directly into the problem of saturation (plug: check out my recent post on saturation for the Resilience in Software Foundation).

Now, I expect that these companies will get better at recovering from these unexpected increases in load as they gain experience with the problem. Because of capacity constraints with those pricey GPUs, they can’t always scale their way out of these problems, but they can redistribute resources, and they can get better at load shedding and other sorts of graceful degradation to limit the damage of overload. And I bet that’s where they’re both investing in reliability today. At least, I hope so. Because this problem isn’t going to go away. If anything, I suspect their loads will become even more unpredictable as people continue to innovate with LLMs. Because AIs don’t seem to do any better at predicting the future than humans.
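For readers who haven’t run into load shedding before, here is a minimal sketch of the idea in Python. To be clear, this is a toy illustration and not how OpenAI or Anthropic actually do it: the `LoadShedder` class, the two priority levels, and the 80% threshold are all hypothetical. The point is just that, when capacity is fixed, you can reject lower-priority work early so that the system degrades gracefully instead of falling over for everyone.

```python
from dataclasses import dataclass


@dataclass
class LoadShedder:
    """Toy admission controller (hypothetical, for illustration only)."""

    capacity: int                 # max concurrent requests we can serve
    shed_threshold: float = 0.8   # utilization at which low-priority work is shed
    in_flight: int = 0            # requests currently being served

    def try_admit(self, high_priority: bool) -> bool:
        """Return True if the request is admitted, False if it is shed."""
        if self.in_flight >= self.capacity:
            return False  # saturated: reject everything
        utilization = self.in_flight / self.capacity
        if not high_priority and utilization >= self.shed_threshold:
            return False  # nearing saturation: shed low-priority work first
        self.in_flight += 1
        return True

    def release(self) -> None:
        """Call when an admitted request finishes."""
        self.in_flight = max(0, self.in_flight - 1)


if __name__ == "__main__":
    shedder = LoadShedder(capacity=10)
    for i in range(12):
        admitted = shedder.try_admit(high_priority=(i % 3 == 0))
        print(f"request {i}: {'admitted' if admitted else 'shed'}")
```

A real system would also have to decide what counts as low priority and what to tell a rejected client (retry later, fall back to a smaller model, and so on), and those decisions are where most of the hard work is.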