All other things being equal, what’s more expensive for your business: a fifteen-minute outage or an eight-hour outage? If you had to pick one, which would you pick? Hold that thought.

Imagine that you work for a company that provides a software service over the internet. A few days ago, your company experienced an incident where the service went down for about four hours. Executives at the company are pretty upset about what happened: “we want to make certain this never happens again” is a phrase you’ve heard several times.

The company held a post-incident review, and the review process identified a number of action items to prevent a recurrence of the incident. Some of this follow-up work has already been completed, but there are other items that are going to take your team a significant amount of time and effort. You already had a decent backlog of reliability work that you had been planning on knocking out this quarter, but this incident has put this other work onto the back burner.

One night, the Oracle of Delphi appears to you in a dream.

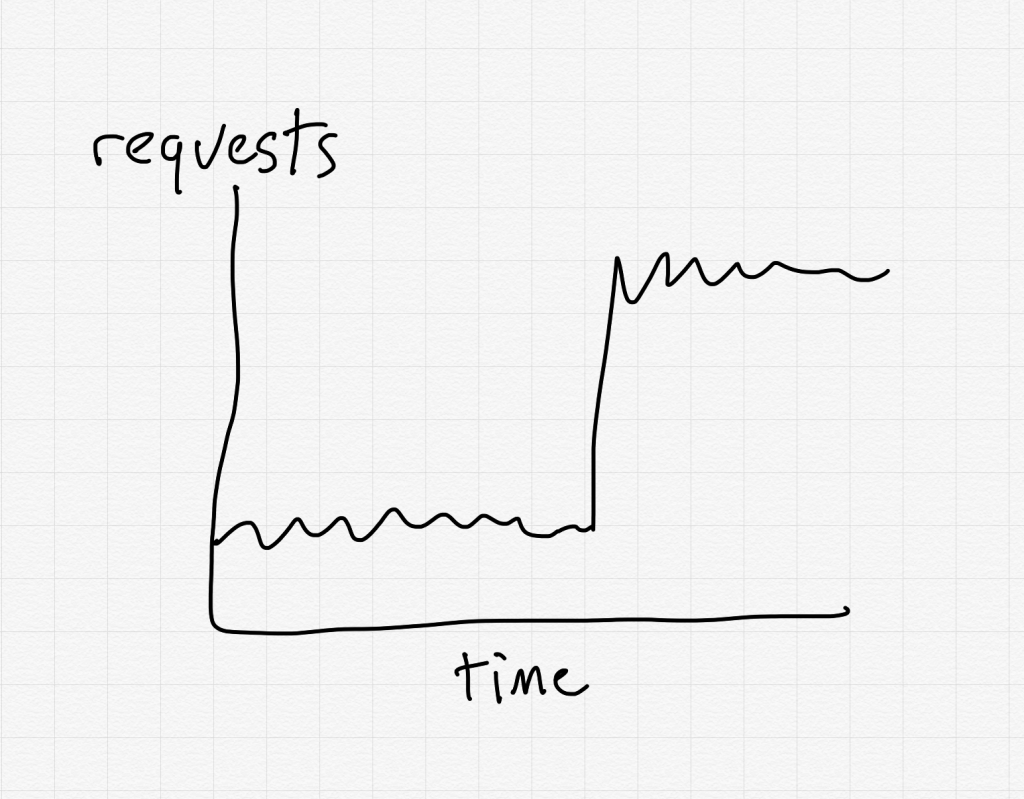

The Oracle tells you that if you prioritize the incident follow-up work, then in a month your system is going to suffer an even worse outage, one that is eight hours long. The failure mode for this outage will be very different from the last one. Ironically, one of the contributors to this outage will be an unintended change in system behavior that was triggered by the follow-up work. Another contributor will be a known risk to the system that you had been working on addressing, but that you put off after the incident changed priorities.

She goes on to tell you that if you instead do the reliability work that was on your backlog, you will avoid this outage. However, your system will instead experience a fifteen-minute outage, with a failure mode that is very similar to the one you recently experienced. The impact will be much smaller because of the follow-up work that has already been completed, as well as the engineers now being more experienced with this type of failure.

Which path do you choose: the novel eight-hour outage, or the “it happened again!” fifteen-minute outage?

By prioritizing preventative work from recent incidents, we are implicitly assuming that a recent incident is the one most likely to bite us again in the future. It’s important to remember that this is an illusion: we feel like the follow-up work is the most important thing we can do for reliability because we have a visceral sense of the incident we just went through. It’s much more real to us than a hypothetical, never-happened-before future incident. Unfortunately, we only have a finite amount of resources to spend on reliability work, and our memory of the recent incident does not mean that the follow-up work is the reliability work that will provide the highest return on investment.

In real life, we are never granted perfect information about the future consequences of our decisions. We have only our own judgment to guide us on how we should prioritize our work based on the known risks. Always prioritizing the action items from the last big incident is the easy path. The harder one is imagining the other types of incidents that might happen in the future, and recognizing that those might actually be worse than a recurrence. After all, you were surprised before. You’re going to be surprised again. That’s the real generalizable lesson of that last big incident.