Cloudflare consistently publishes the highest-quality public incident writeups of any tech company. Their latest is no exception: Cloudflare incident on November 14, 2024, resulting in lost logs.

I want to make some quick observations about the common incident patterns that show up here. All of the quotes are from the original Cloudflare post.

Saturation (overload)

In this case, a misconfiguration in one part of the system caused a cascading overload in another part of the system, which was itself misconfigured.

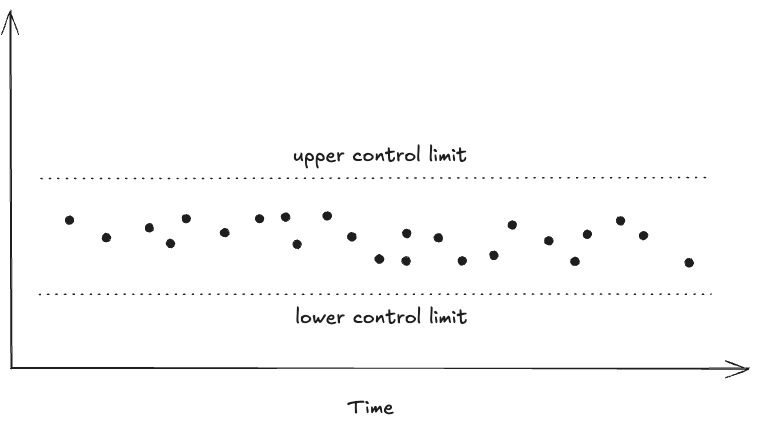

A very common failure mode in incidents is when the system reaches some limit, where it cannot keep up with the demands put upon it. The blog post uses the term overload, and often you hear the term resource exhaustion. Brendan Gregg uses the term saturation in his USE method for analyzing system performance.

A short temporary misconfiguration lasting just five minutes created a massive overload that took us several hours to fix and recover from.

The resilience engineering researcher David Woods uses the term saturation in a more general sense, to refer to a system being in a state where it can no longer meet the demands put upon it. The challenge of managing the risk of saturation is a key part of his theory of graceful extensibility.

It’s genuinely surprising how many incidents involve saturation, and how difficult it can be to recover when the system saturates.
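To illustrate why a five-minute overload can take hours to recover from, here is a minimal sketch of a queue whose spare capacity is small relative to the spike. The function name and all of the numbers are my own illustrative assumptions, not figures from the Cloudflare post.

```python
def ticks_to_recover(capacity: int, normal: int, spike: int, spike_ticks: int) -> int:
    """Count the ticks needed to drain the backlog after a load spike ends.

    All parameters are hypothetical units of work per tick.
    """
    if normal >= capacity:
        raise ValueError("no spare capacity: the backlog would never drain")

    backlog = 0
    # During the spike, arrivals exceed capacity and the backlog grows.
    for _ in range(spike_ticks):
        backlog = max(0, backlog + spike - capacity)

    # After the spike, only the spare capacity (capacity - normal) drains it.
    ticks = 0
    while backlog > 0:
        backlog = max(0, backlog + normal - capacity)
        ticks += 1
    return ticks


# A 40x spike lasting 5 ticks, with 10% headroom over normal load.
print(ticks_to_recover(capacity=110, normal=100, spike=4000, spike_ticks=5))  # 1945
```

In this toy model the backlog builds at 3,890 units per tick but drains at only 10 units per tick, so recovery takes hundreds of times longer than the spike itself.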

This massive increase, resulting in roughly 40 times more buffers, is not something we’ve provisioned Buftee clusters to handle.

For other examples, see some of these other posts I’ve written:

- Quick takes on Rogers Network outage executive summary

- Slack’s Jan 2021 outage: a tale of saturation

- Uber’s adventures in the adaptive universe

When safety mechanisms make things worse (Lorin’s law)

In a previous blog post entitled A conjecture on why reliable systems fail, I wrote:

Once a system reaches a certain level of reliability, most major incidents will involve:

- A manual intervention that was intended to mitigate a minor incident, or

- Unexpected behavior of a subsystem whose primary purpose was to improve reliability

In this case, it was a failsafe mechanism that enabled the saturation failure mode (emphasis in the original):

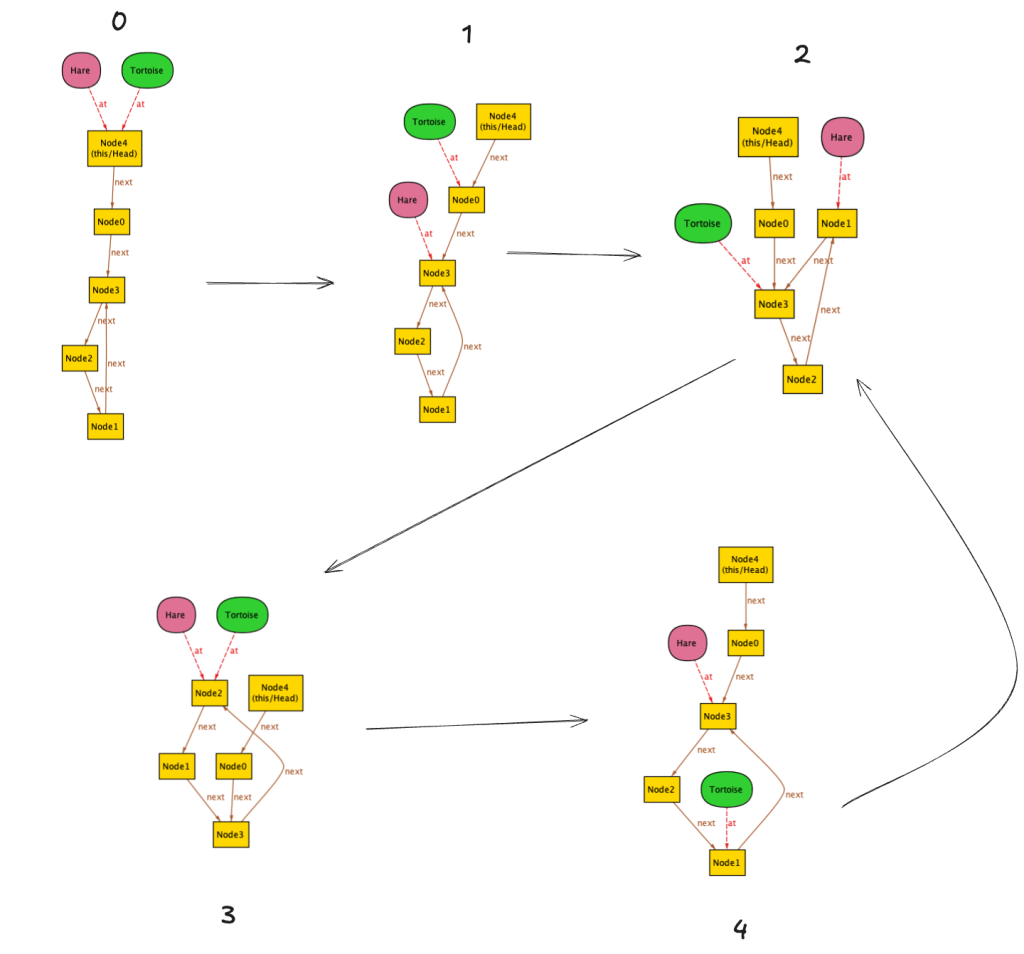

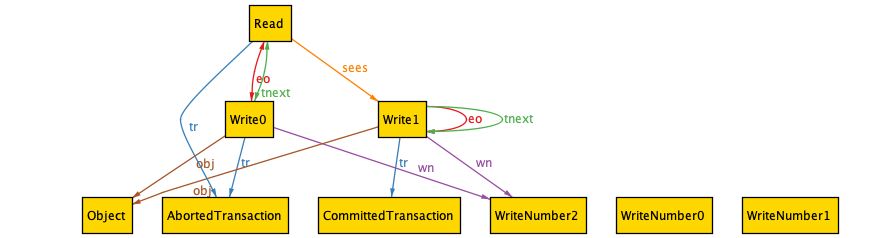

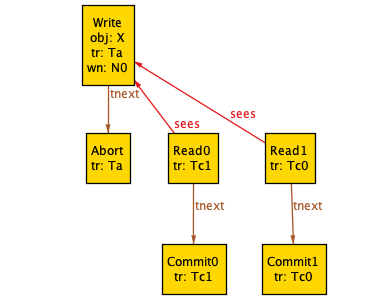

This bug essentially informed Logfwdr that no customers had logs configured to be pushed. The team quickly noticed the mistake and reverted the change in under five minutes.

Unfortunately, this first mistake triggered a second, latent bug in Logfwdr itself. A failsafe introduced in the early days of this feature, when traffic was much lower, was configured to “fail open”. This failsafe was designed to protect against a situation when this specific Logfwdr configuration was unavailable (as in this case) by transmitting events for all customers instead of just those who had configured a Logpush job. This was intended to prevent the loss of logs at the expense of sending more logs than strictly necessary when individual hosts were prevented from getting the configuration due to intermittent networking errors, for example.
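Here is a minimal sketch of what a fail-open filter like this might look like. This is not Cloudflare's code: the function name, the parameters, the customer counts, and the choice to treat a blank configuration the same as a missing one are all my assumptions for illustration.

```python
from typing import Optional


def customers_to_forward(
    event_customers: set[str],
    configured: Optional[set[str]],
    fail_open: bool = True,
) -> set[str]:
    """Return the customers whose events should be forwarded.

    Assumption: a blank (empty) configuration is indistinguishable
    from an unavailable one, so both trigger the failsafe.
    """
    if not configured:
        # Fail open: forward everything. Fail closed: forward nothing.
        return set(event_customers) if fail_open else set()
    return event_customers & configured


# Hypothetical numbers: 1000 customers, 25 of them with a push job configured.
all_customers = {f"cust-{i}" for i in range(1000)}
configured = {f"cust-{i}" for i in range(25)}

normal = customers_to_forward(all_customers, configured)
failed_open = customers_to_forward(all_customers, set())
print(len(normal), len(failed_open))  # 25 1000
```

With a handful of customers, failing open costs almost nothing. As the gap between "all customers" and "configured customers" grows, the same branch silently turns into a load multiplier.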

Automated safety mechanisms themselves add complexity, and we are no better at implementing bug-free safety code than we are at implementing bug-free feature code. The difference is that when safety mechanisms go awry, they tend to be much more difficult to deal with, as we saw here.

I’m not opposed to automatic safety mechanisms! For example, I’m a big fan of autoscalers, which are an example of an automated safety mechanism. But it’s important to be aware that there’s a tradeoff: they prevent simpler incidents but enable new, complex incidents. The lesson I take away is that we need to get good at dealing with complex incidents where these safety mechanisms will inevitably contribute to the problem.

Complex interactions (multiple contributing factors)

Unfortunately, this first mistake triggered a second, latent bug in Logfwdr itself.

(Emphasis mine)

I am a card-carrying member of the “no root cause” club: I believe that all complex systems failures result from the interaction of multiple contributors that all had to be present for the incident to occur and to be as severe as it was.

When this failsafe was first introduced, the potential list of customers was smaller than it is today.

In this case, we see the interaction of multiple bugs:

Even given this massive overload, our systems would have continued to send logs if not for one additional problem. Remember that Buftee creates a separate buffer for each customer with their logs to be pushed. When Logfwdr began to send event logs for all customers, Buftee began to create buffers for each one as those logs arrived, and each buffer requires resources as well as the bookkeeping to maintain them. This massive increase, resulting in roughly 40 times more buffers, is not something we’ve provisioned Buftee clusters to handle.

(Emphasis mine)

A huge increase in the number of buffers is a failure mode that we had predicted, and had put mechanisms in Buftee to prevent this failure from cascading. Our failure in this case was that we had not configured these mechanisms. Had they been configured correctly, Buftee would not have been overwhelmed.
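The post doesn't describe what those mechanisms are. One plausible shape, sketched below purely as an assumption, is a hard cap on the number of per-customer buffers so that excess load is rejected instead of exhausting resources. The class name, the limit, and the customer counts are hypothetical.

```python
from collections import deque


class BufferPool:
    """Per-customer buffers with a hard cap on how many may exist."""

    def __init__(self, max_buffers: int) -> None:
        self.max_buffers = max_buffers
        self.buffers: dict[str, deque[bytes]] = {}
        self.rejected = 0  # events shed because the cap was reached

    def append(self, customer: str, event: bytes) -> bool:
        """Buffer an event; return False if it had to be shed."""
        buf = self.buffers.get(customer)
        if buf is None:
            if len(self.buffers) >= self.max_buffers:
                # Refuse to create another buffer rather than run out of resources.
                self.rejected += 1
                return False
            buf = self.buffers[customer] = deque()
        buf.append(event)
        return True


# Hypothetical overload: events for 1000 customers hit a pool capped at 25 buffers.
pool = BufferPool(max_buffers=25)
for i in range(1000):
    pool.append(f"cust-{i}", b"event")
print(len(pool.buffers), pool.rejected)  # 25 975
```

Note that a cap like this only protects the system if the limit is actually set. An unconfigured or effectively unlimited `max_buffers` behaves exactly as if the mechanism didn't exist.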

The two issues that the authors explicitly call out in the (sigh) root causes section are:

- A bug that resulted in a blank configuration being provided to Logfwdr

- Incorrect Buftee configuration for preventing failure cascades

However, there are also other factors that enabled the incident:

- The presence of failsafe (fail open) behavior

- The increase in size of the potential list of customers over time

- Buftee implementation that creates a separate buffer for each customer with logs to be pushed

- The amount of load that Buftee was provisioned to handle

I’ve written about the problems with the idea of root cause several times in the past, including:

- The problem with a root cause is that it explains too much

- Why incidents can’t be monocausal

- Root cause of failure, root cause of success

Keep an eye out for those patterns!

In your own organization, keep an eye out for patterns like saturation, when safety mechanisms make things worse, and complex interactions. They’re easy to miss if you aren’t explicitly looking for them.