I’ve been on a bit of a control systems kick lately, and, serendipitously, I happened to see this tweet, which referenced a paper by Henry Yin at Duke University titled The crisis in neuroscience.

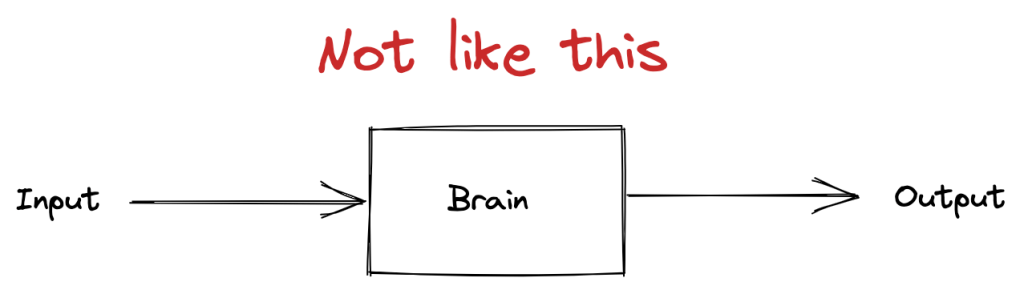

In the paper, Yin argues that neuroscience has failed to make progress in modeling human behavior because it tries to model the brain as a linear system, where you can study it by generating inputs and observing outputs.

Yin proposes an alternative: to understand behavior from a neurological perspective, you need to view the brain as a collection of hierarchical, closed-loop control systems.

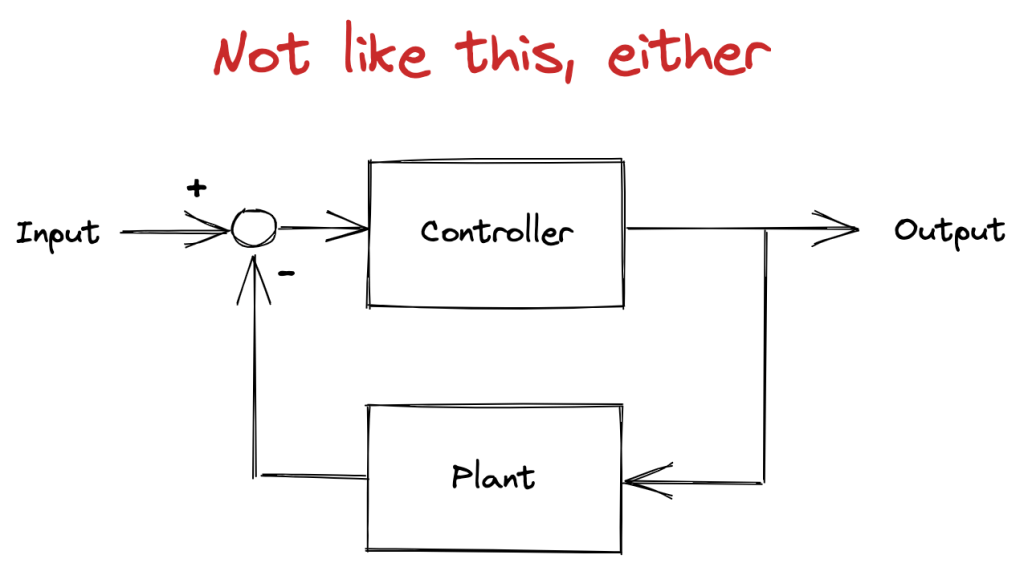

Now, the cybernetics folks have long argued that you should model human brains as control systems. But Yin argues that the cyberneticists got an important thing wrong in their control models: their models were too close to engineering applications to be directly applicable to organisms.

In an engineered control system, a human operator specifies the set point. For example, for a cruise control system, you’d set the desired speed. In the block diagram above, this set point is provided as the input to the system.

The output of the “Plant” block is the current state of the variable you’re trying to control (e.g., current speed). The controller takes as input the difference between the set point and the current state, and uses that to determine how to drive the plant (e.g., input to the motor).
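To make the loop concrete, here is a minimal sketch of the cruise control example in Python. It is only an illustration under assumptions of my own: the controller is a simple proportional one, the "plant" is a toy model where the drive signal changes the speed directly, and the names `simulate_cruise_control` and `gain` are made up for this post.

```python
def simulate_cruise_control(set_point, initial_speed=0.0, gain=0.5, steps=50):
    """Run a toy proportional feedback loop and return the speed after each step."""
    speed = initial_speed
    history = []
    for _ in range(steps):
        # Comparator: difference between the set point and the current state.
        error = set_point - speed
        # Controller: decide how hard to drive the plant (assumed proportional).
        drive = gain * error
        # Plant: toy model in which the drive signal changes the speed directly.
        speed = speed + drive
        history.append(speed)
    return history


speeds = simulate_cruise_control(set_point=100.0)
print(speeds[0], speeds[-1])
```

The thing to notice is that the set point comes in from outside the loop as an argument: the operator chooses it, and the loop just closes the gap.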

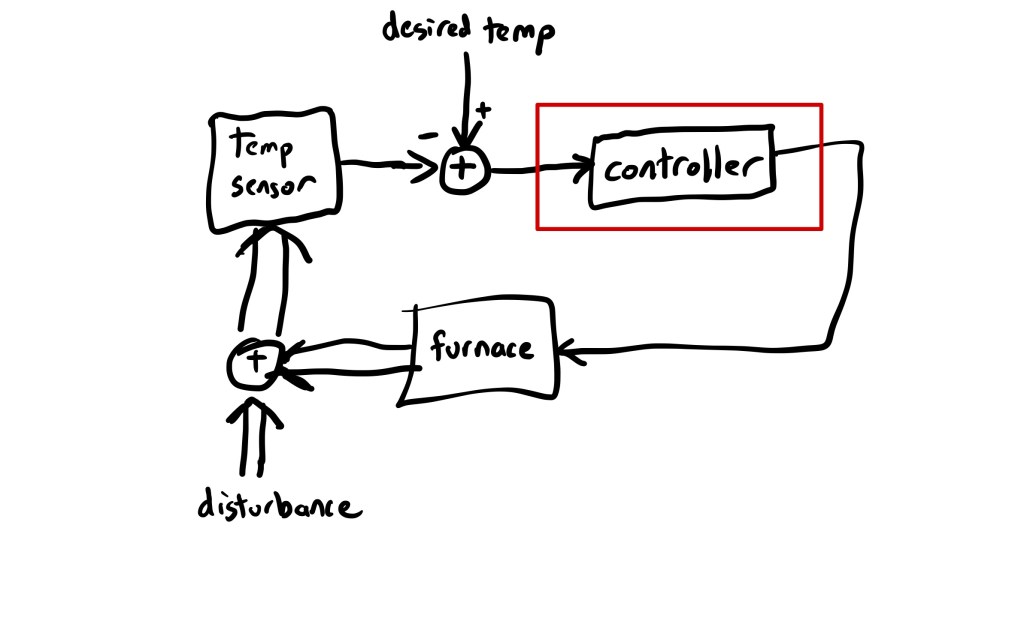

Here’s a block diagram of everyone’s favorite control systems example, the thermostat:

I’ve used double arrows to indicate signals that propagate through the environment, and single arrows to indicate signals that propagate through wires. I’ve put a red box around what Yin claims the cyberneticists hold as their model for control in animals.

The variable under control is the temperature. A human sets the desired temperature, and a temperature sensor reads the current temperature. The controller takes as input the difference between the desired temperature and the current temperature, and uses that to determine whether or not to turn on the furnace.

The actual temperature in the house is determined both by the output of the furnace, and by other factors (e.g., temperature outside, how good the insulation is, whether someone has opened a door), which I’ve labeled disturbance.
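Here is a rough sketch of that thermostat loop in Python, assuming a furnace that is either fully on or off and a disturbance modeled as heat leaking toward the outside temperature. The hysteresis band and every parameter name and value (`furnace_heat`, `leak_rate`, `hysteresis`) are my own assumptions for illustration, not something taken from the paper.

```python
def simulate_thermostat(desired_temp, outside_temp, initial_temp, steps=200,
                        furnace_heat=1.0, leak_rate=0.05, hysteresis=0.5):
    """Simulate an on/off thermostat and return the house temperature per step."""
    temp = initial_temp
    furnace_on = False
    temps = []
    for _ in range(steps):
        # Comparator: desired temperature minus the sensed temperature.
        error = desired_temp - temp
        # Controller: switch the furnace, with a small dead band (an assumption)
        # so it does not flip on and off at every step.
        if error > hysteresis:
            furnace_on = True
        elif error < -hysteresis:
            furnace_on = False
        heating = furnace_heat if furnace_on else 0.0
        # Disturbance: heat leaking toward the outside temperature.
        disturbance = leak_rate * (outside_temp - temp)
        # The actual temperature depends on both the furnace and the disturbance.
        temp = temp + heating + disturbance
        temps.append(temp)
    return temps


temps = simulate_thermostat(desired_temp=20.0, outside_temp=5.0, initial_temp=10.0)
print(min(temps[-50:]), max(temps[-50:]))
```

Even though the disturbance keeps pulling the temperature down, the loop holds it in a narrow band around the desired value once it has warmed up.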

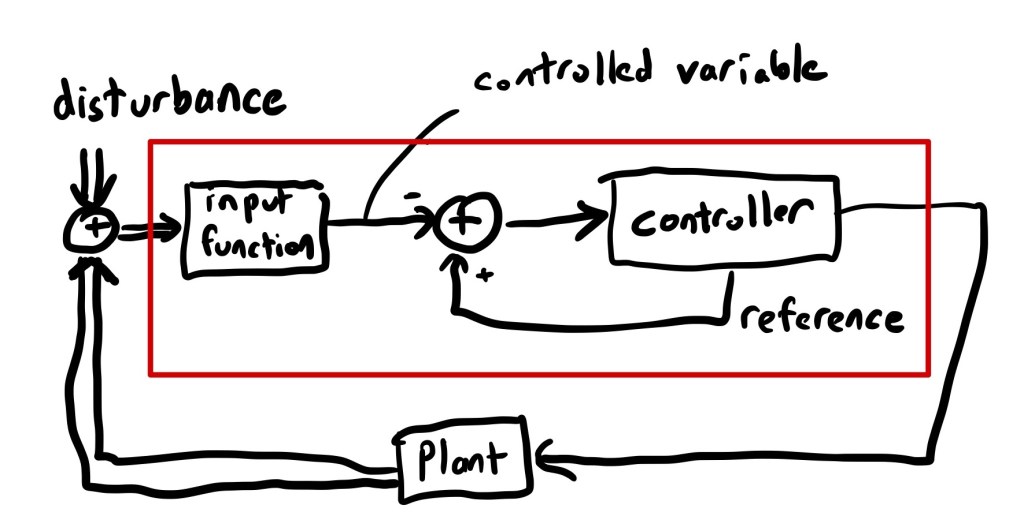

The problem with this, Yin argues, is that the red box is not a good model for the control that happens in the brain. As an alternative, he proposes the following model:

You can think of the red box as being the stuff inside some aspect of the brain, and the “plant” as being the things that this aspect controls (e.g., other parts of the brain, muscles).

The difference in Yin’s model is that the controller determines the set point. There’s no external agent specifying the desired value as an input. Instead, the controller generates its own set point, which Yin calls the reference value.

Also note that Yin’s model includes the input function inside the red box. This takes sensory input and calculates the variable that’s under control. The difference between this model and the thermostat is that, in the thermostat model, you know from the outside that temperature is the variable being controlled. In Yin’s model, you can’t see from the outside which variable is being controlled: the variable is internal to the control system.
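As a loose illustration of this idea (my own sketch, not code from Yin’s paper), here is a controller in Python that keeps both its reference value and its input function private. The class name `ClosedLoopController`, the helper `run_in_environment`, the choice of "mean of two sensory channels" as the controlled variable, and the toy environment are all hypothetical.

```python
class ClosedLoopController:
    """Toy controller whose reference value and input function are internal."""

    def __init__(self, input_function, reference_value, gain=0.5):
        self._input_function = input_function    # maps sensory input to the controlled variable
        self._reference_value = reference_value  # set inside the controller, not by an operator
        self._gain = gain

    def step(self, sensory_input):
        # Input function: compute the controlled variable from raw sensory input.
        perceived = self._input_function(sensory_input)
        # Comparator: internal reference value minus the perceived value.
        error = self._reference_value - perceived
        # Output that acts on the plant (assumed proportional).
        return self._gain * error


def run_in_environment(controller, disturbances, steps=100):
    """Toy environment: the controller's accumulated output shifts every sensory channel."""
    effect = 0.0
    for _ in range(steps):
        sensory = [effect + d for d in disturbances]
        effect += controller.step(sensory)
    return [effect + d for d in disturbances]


def mean(values):
    return sum(values) / len(values)


controller = ClosedLoopController(input_function=mean, reference_value=10.0)
final_sensory = run_in_environment(controller, disturbances=[2.0, -4.0])
print(final_sensory, mean(final_sensory))
```

An outside observer only sees the two sensory channels and the controller’s output. Neither channel settles at the reference value on its own; it is their mean, which only exists inside the controller, that is being held steady.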

Despite knowing nothing about neuroscience, and only knowing a bit about control systems, I still found this paper surprisingly accessible. I recommend it. There’s a lot more here than what I’ve touched on in this post.