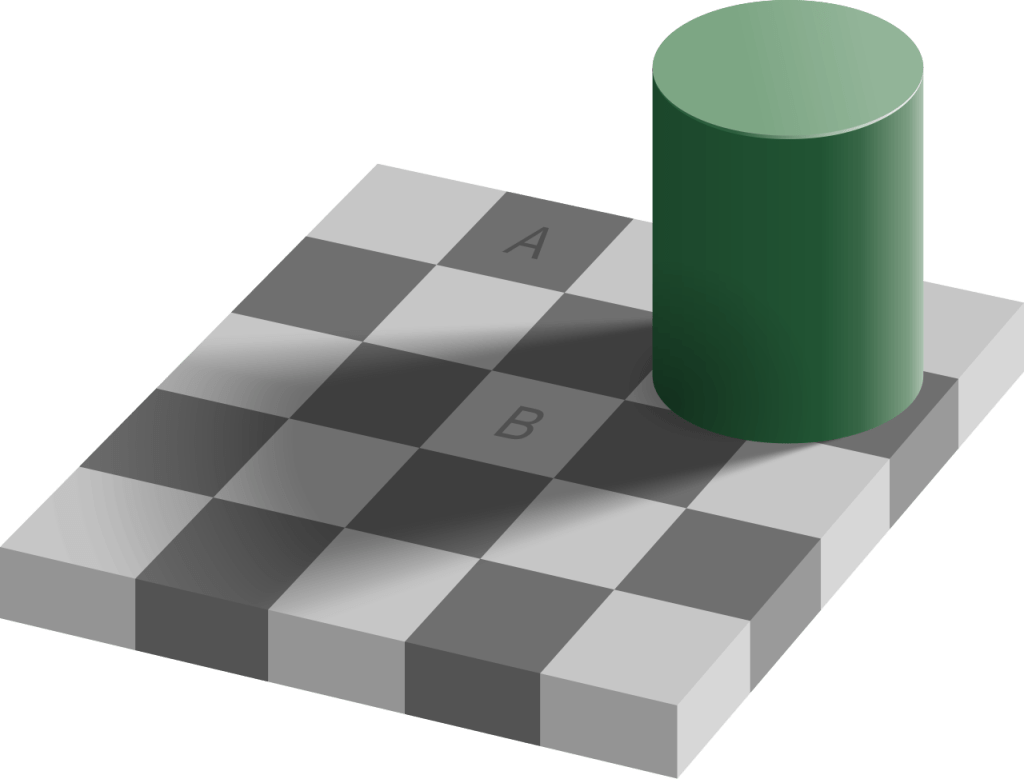

Here’s a famous optical illusion, which was developed by the American neuroscientist Edward H. Adelson.

Even though square A appears darker than square B, the two are, in fact, the exact same shade of gray. The illusion is so powerful that knowing about it doesn’t destroy its effect; you’ll still “see” two different shades even after you’ve been told the truth. It’s so powerful that you may not believe me over your lying eyes. If you’re on macOS, you can confirm the illusion by opening the Digital Color Meter app and hovering your mouse pointer over each square in turn. You’ll see that both squares have the same RGB value. In hex, the value is #646464.
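If you'd rather check the arithmetic than trust a color picker, here's a minimal sketch of the comparison in Python. The two tuples are assumptions: they stand in for whatever values you sample from squares A and B (an RGB reading of 100, 100, 100 is what #646464 works out to in decimal), and `to_hex` is just a helper name I made up for illustration.

```python
def to_hex(rgb):
    """Format an (R, G, B) tuple of 0-255 integers as a #rrggbb string."""
    r, g, b = rgb
    return f"#{r:02x}{g:02x}{b:02x}"


# Hypothetical sampled values, assumed to match the hex value quoted above.
# Replace these with the readings you get from your own color picker.
square_a = (100, 100, 100)
square_b = (100, 100, 100)

print(to_hex(square_a))      # hex string for square A
print(to_hex(square_b))      # hex string for square B
print(square_a == square_b)  # True when the two squares are the same shade
```

The comparison itself is trivial, which is rather the point: the numbers agree even when your eyes insist they don't.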

I’m going to suggest two stylized reactions to witnessing this illusion. One reaction is to say, “Oh, no! This illusion clearly illustrates a flaw in the human visual system! We should work on developing a vision correction technology so that people don’t fall victim to problems that would arise from this failure mode in human visual processing.”

A very different reaction is to say, “Oh, wow! This illusion gives us a hint into how the human visual system functions! Our brain must contain a prior model about the relationship between light, shadow, and objects, and is imposing that model when processing the signals coming from our optic nerve. This illusion appears to be an example of a pathological case which violates the human brain’s model.”

The first reaction is, admittedly, a ridiculous strawman. These sorts of illusions are harmless, so there’s no motivation to try to “correct” for them. After all, it’s no coincidence that the illusion was developed by a researcher who studies human vision. Even though our visual system is failing us in this strange case, the value of an illusion like this is not to learn the circumstances in which our vision fails, but instead to use the failure to gain insight into how our vision works so effectively the vast majority of the time.

Last week, I wrote a post about Safety-II, the idea that we will learn more about how to create reliability in our system by studying the (common) successful cases rather than the (rare) failure cases. But we can also use the failure cases to learn about how the system normally succeeds! Just as neuroscientists can use optical illusions (where the vision system fails) to learn how the visual system succeeds, we can use incidents (when our system fails) to learn about how our system succeeds.

To make this more concrete, imagine you’re in an incident review meeting, and one of the incident responders, someone who is a real expert at your company, is talking about how, in hindsight, they misdiagnosed the problem during the incident. The signals that they saw misled them into thinking that the system was in state A, when really the system was in state B. And that led to the incident taking much longer to resolve, because the responders went down the wrong path.

The typical sort of question to ask in a review meeting would be along the lines of “what can we do to make sure we don’t misdiagnose this type of problem in the future?” But there’s a very different question that you can ask. And that question is, “how did the responder come to the conclusion the system was in state A?” Asking this question will expose details about the responder’s mental model of how the system actually works. If the responder was an expert, and they were led astray by the signals, then it’s likely that this incident was a pathological case, an operational equivalent of the optical illusion we saw above. By asking the responder about how they made the diagnosis, you are giving the meeting attendees the opportunity to learn from the expert responder. Similarly, you can ask the responder, “how did you finally figure out that the system was in state B?”, which will give you another chance to retroactively witness the work of an expert in action.

Like optical illusions, incidents are pathological cases. But, unlike illusions, incidents aren’t harmless. This means that the natural reaction is, “what went wrong here, and how do we stop doing that?” But if our goal is improvement, we should recognize there’s a lot more leverage in maximizing the opportunity to learn about what’s working well today, from the experts who are doing that work well. After all, there’s a reason we called that responder an expert: their work has led to a lot more success than failure.