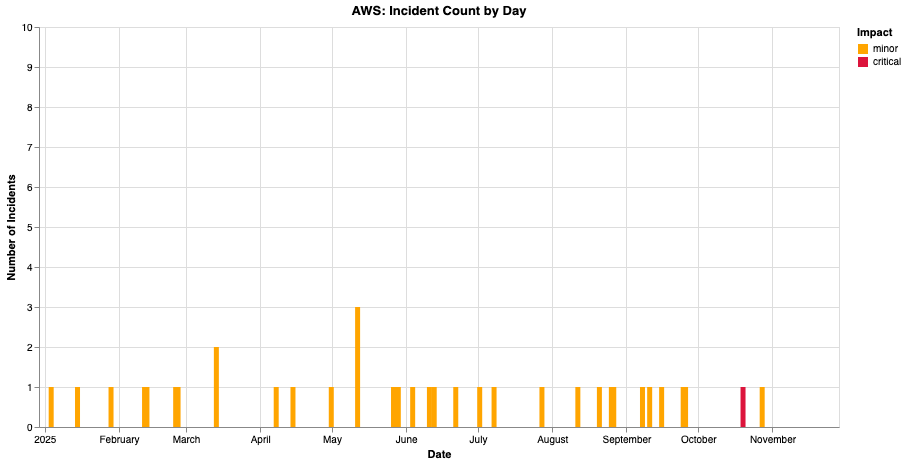

Like many companies, AWS has a defined process for reviewing incidents. They call their process Correction of Error. For example, there’s a page on Correction of Error in their Well-Architected Framework docs, and there’s an AWS blog entry titled Why you should develop a correction of error (COE).

In my previous blog post on the AWS re:Invent talk about the us-east-1 incident that happened back in October, I wrote the following:

Finally, I still grit my teeth whenever I hear the Amazonian term for their post-incident review process: Correction of Error.

On LinkedIn, Michael Fisher asked me: “Why does the name Correction of Error make you grit your teeth?” Rather than just reply in a LinkedIn comment, I thought it would be better if I wrote a blog post instead. Nothing in this post will be new to regular readers of this blog.

I hate the term “Correction of Error” because it implies that incidents occur as a result of errors. As a consequence, it suggests that the function of a post-incident review process is to identify the errors that occurred and to fix them. I think this view of incidents is wrong, and dangerously so: It limits the benefits we can get out of an incident review process.

Now, I will agree that, in just about every incident that happens, during the post-incident review work, you can identify errors that happened. This almost always involves identifying one or more bugs in code (I’ll call these software defects here). You can also identify process errors: things that people did that, in hindsight, we can recognize as having contributed to an incident. (As an aside, see Scott Snook’s book Friendly Fire: The Accidental Shootdown of U.S. Black Hawks over Northern Iraq for a case study of an incident where no such errors were even identified. But I’ll still assume here that we can always identify errors after an incident.)

So, if I agree that there are always errors involved in incidents, what’s my problem with “Correction of Error”? In short, my problem with this view is that it fundamentally misunderstands the role of both software defects and human work in incidents.

More software defects than you can count

Wall Street indexes predicted nine out of the last five recessions! And its mistakes were beauties. – Paul Samuelson

Yes, you can always find software defects in the wake of an incident. The problem with attributing an incident to a software defect is that modern software systems running in production are absolutely riddled with defects. I cannot count the times I’ve read about an incident that involved a software defect that had been present for months or even years, and had gone completely undetected until conditions arose where it contributed to an outage. My claim is that there are many, many, many such defects in your system that have yet to manifest as outages. Indeed, I think that most of these defects will never manifest as outages.

If your system is currently up (which I bet it is), and if your system currently has multiple undetected defects in it (which I also bet it does), then it cannot be the case that defects are a sufficient condition for incidents to occur. In other words, defects alone can’t explain incidents. Yes, they are part of the story of incidents, but only a part of it. Focusing solely on the defects, the errors, means that you won’t look at the systemic nature of system failures. You will not see how the existing defects interact with other behaviors in the system to enable the incident. You’re looking for errors, and unexpected interactions aren’t “errors”.
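To make the "not a sufficient condition" point concrete, here is a toy sketch in Python. Everything in it (the function names, the latency numbers, the idea of an upstream going quiet) is made up for illustration and isn't drawn from any real incident. The same defect is present in both runs; only the conditions differ.

```python
def mean_latency_ms(samples: list[float]) -> float:
    # Latent defect: an empty sample list raises ZeroDivisionError.
    return sum(samples) / len(samples)


def failed_windows(windows: list[list[float]]) -> int:
    """Count the windows in which the defect actually manifested."""
    failures = 0
    for samples in windows:
        try:
            mean_latency_ms(samples)
        except ZeroDivisionError:
            failures += 1
    return failures


# Normal traffic: every window has samples, so the defect stays dormant.
normal_traffic = [[12.0, 15.5], [9.8], [20.1, 18.3, 17.0]]

# Same code, same defect, but one window is empty
# (hypothetically, an upstream service sent nothing).
quiet_upstream = normal_traffic + [[]]

print(failed_windows(normal_traffic))  # 0
print(failed_windows(quiet_upstream))  # 1
```

The defect explains nothing about why the first run was fine. To understand the second run, you have to look at how the empty window came about and how it interacted with the code.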

For more of my thoughts on this point, see my previous posts The problem with a root cause is that it explains too much, Component defects: RCA vs RE and Not causal chains, but interactions and adaptations.

On human work and correcting errors

Another concept that error invokes in my mind is what I called process error above. In my experience, this typically refers to either insufficient validation during development work that led to a defect making it into production, or an operational action that led to the system getting into a bad state (for example, a change to network configuration that results in services accidentally becoming inaccessible). In these cases, correction of error implies making a change to development or operational processes in order to prevent similar errors happening in the future. That sounds good, right? What’s the problem with looking at how the current process led to an error, and changing the process to prevent future errors?

There are two problems here. One problem is that there might not actually be any kind of “error” in the normal work processes, that these processes are actually successful in virtually every circumstance. Imagine if I asked, “Let’s tally up the number of times the current process did not lead to an incident, versus the number of times that the current process did lead to an incident, and use that to score how effective the process is.” Correction of error implies to me that you’re looking to identify an error in the work process. It does not imply “let’s understand how the normal work actually gets done, and how it was a reasonable thing for people to typically work that way.” In fact, changing the process may actually increase the risk of future incidents! You could be adding constraints, which could potentially lead to new dangerous workarounds. What I want is a focus on understanding how the work normally gets done. Correction of error implies the focus is specifically on identifying the error and correcting it, not on understanding the nature of the work and how decisions made sense in the moment.

Now, sometimes people need to use workarounds in order to get their work done because there are constraints that are preventing them from doing the work the way they are supposed to do it, and the workaround is dangerous in some way, and that contributes to the incident. And this is an important insight to take away from an incident! But this type of workaround isn’t an error, it’s an adaptation. Correction of error to me implies changing work practices identified as erroneous. Changing work practices is typically done by adding constraints on the way work is done. And it’s exactly these types of constraints that can increase risk!

Remember, errors happen every single day, but incidents don’t. Correction of error evokes the idea that incidents are caused by errors. But until you internalize that errors aren’t enough to explain incidents, you won’t understand how incidents actually happen in complex systems. And that lack of understanding will limit how much you can genuinely improve the reliability of your system.

Finally, I think that correcting errors is such a feeble goal for a post-incident process. We can get so much more out of post-incident work. Let’s set our sights higher.