I’ve been on a bit of an anti-root-cause-analysis (RCA) tear lately. On LinkedIn, health and safety expert Vincent Theobald-Vega left a thoughtful, detailed comment on my last post. In his comment, he noted that RCA done poorly leads to bad results, and he pointed me to what he described as a good guide to using the RCA approach: Investigating accidents and incidents. This is a free book published by the UK Health and Safety Executive.

However, after reading this guide, my perception of RCA has not improved. I still believe that RCA is based on a fundamentally incorrect model of complex systems failure. To clarify my thinking, I’ve tried to explain where I see its flaws in this post.

A quick note on terminology: while the guide uses the term accident, I’m going to use the term incident instead, to remain consistent with the usage in the software domain. The guide uses incident to refer to near misses.

Some content in the guide that I appreciated

While I disagree with RCA as described in the guide, I wanted to start by pointing out areas of agreement I had with the guide.

Not just a single cause

The guide does note that there are multiple causes involved in incidents. It notes that “adverse events have many causes” (p6), and it also mentions that “Very often, a number of chance occurrences and coincidences combine to create the circumstances in which an adverse event can happen. All these factors should be recorded here in chronological order, if possible.” (p10)

While I disagree with the causal language, I do at least appreciate that it points out there are multiple factors.

Examine how work is really done

The guide does talk about examining the work and the context under which it takes place. Under “information and insights gained from an investigation”, one of the bullet points is “A true snapshot of what really happens and how work is really done” (p7).

Under the “Gathering detailed information: How and what?” section, the guide asks “What activities were being carried out at the time?” and “Was there anything unusual or different about the working conditions?” (p15)

“Human error” is not a valid conclusion

The guide is opposed to the idea of human error being identified as a cause. It notes that “Investigations that conclude that operator error was the sole cause are rarely acceptable. Underpinning the ‘human error’ there will be a number of underlying causes that created the environment in which human errors were inevitable.” (p10)

Examine your near misses

Finally, the guide does point out the value in investigating near misses, noting that “While the argument for investigating accidents is fairly clear, the need to investigate near misses and undesired circumstances may not be so obvious. However, investigating near misses and undesired circumstances is as useful, and very much easier than investigating accidents.” (p8)

The RCA model of incidents

Here’s my attempt to sketch out a conceptual model of how incidents happen, according to the guide.

The guide distinguishes between three different types of causes:

- Immediate cause – the most obvious reason why an adverse event happens (p4)

- Underlying cause – the less obvious ‘system’ or ‘organisational’ reason for an adverse event happening (p5)

- Root cause – an initiating event or failing from which all other causes or failings spring. Root causes are generally management, planning or organisational failings (p5).

The idea is that there is a causal chain from root cause to underlying cause to immediate cause. These immediate causes, along with chance occurrences and coincidences, combine to enable the incident.

The guide uses the metaphor of a sequence of dominos to describe this causal chain, where the initial domino (labeled “A” in the diagram below) is a root cause, and the domino labeled “B” an immediate cause.

If left unaddressed, these root causes will lead to multiple incidents in the future. Hence, the goal of an RCA is to identify and eliminate the root causes in order to prevent recurrence of the incident:

The same accidents happen again and again, causing suffering and distress to an ever-widening circle of workers and their families… The findings of the investigation will form the basis of an action plan to prevent the accident or incident from happening again… (p4, emphasis mine)

To get rid of weeds you must dig up the root. If you only cut off the foliage, the weed will grow again. Similarly it is only by carrying out investigations which identify root causes that organisations can learn from their past failures and prevent future failures. (p9, emphasis mine)

The RE model of incidents

My claim is that the RCA model of incidents is dangerously incorrect about the nature of failure in complex systems. More importantly, these flaws in the RCA model lead to sub-optimal outcomes for incident investigations. In other words, we can do a lot better than RCA if we have a different model about how incidents happen.

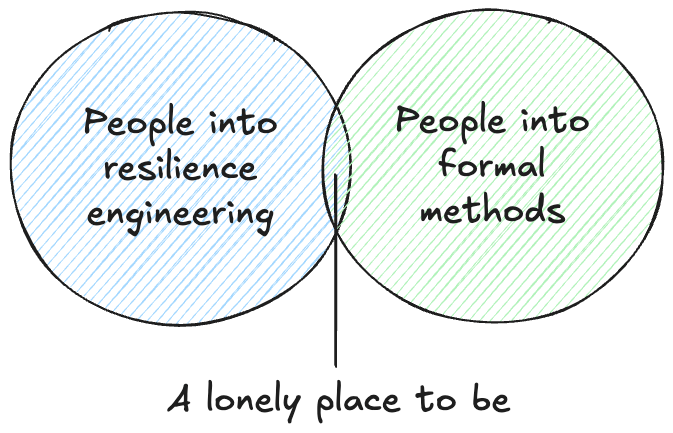

The best way to illustrate this is to describe an alternative model that I believe more accurately models complex systems failures, and results in better investigation outcomes. I’m going to call it the resilience engineering (RE) model in this blog post, partly to encourage folks to explore the research field of resilience engineering, and partly as a way to encourage folks to check out the Resilience in Software Foundation. But you may have heard terms associated with this model, such as the New Look, the New View, Safety-II, and Learning from Incidents (LFI). My favorite summary of the RE model is Richard Cook’s very short paper How Complex Systems Fail.

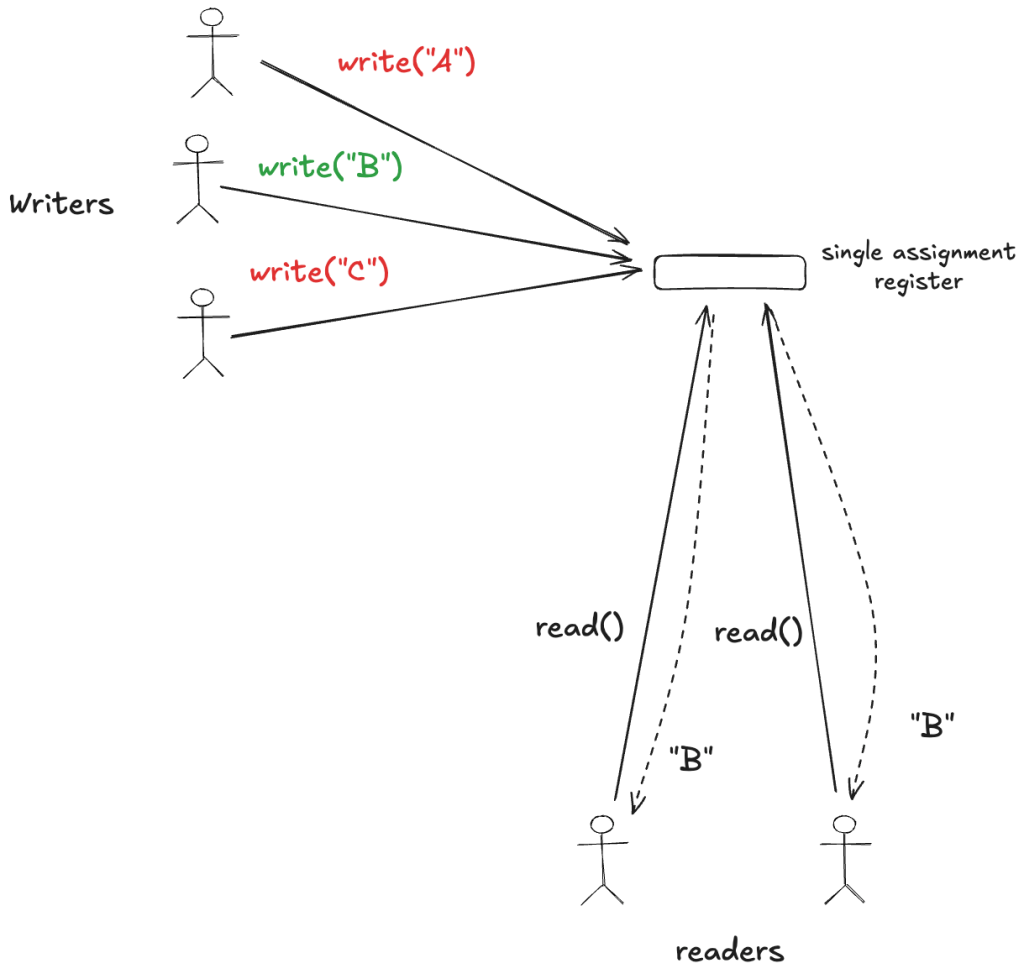

Not causes but interactions

Where RCA treats causes as the first-class entities of an incident, RE instead treats interactions as the first-class entities. It is the unexpected interactions of the different components in a complex system that enable the incident to occur.

Note that there’s no causal chain in this model. Instead, it’s an ever-branching web of contributing factors, where each factor is itself potentially influenced by other factors, and so on. I like how John Allspaw uses the expression the infinite hows to draw a contrast to the causal chain view of five whys. I once proposed the metaphor of the Gamma knife as a way to imagine how these interactions come together to enable an incident.

Labeling the behavior of the individual components as causes is dangerous because it obscures the fact that the problem was not the individual components themselves but that separate subsystems interacted in ways that were unpredictable and harmful. Modern software systems are essentially control systems with multiple feedback loops, and it’s effectively impossible for humans to predict how these loops are going to interact with each other and with the range of possible inputs we might throw at them. You don’t have to look any further than Kubernetes to understand both the value and the surprising behavior of feedback systems.
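To make the feedback-loop point concrete, here is a deliberately tiny sketch. It is a toy of my own construction, not a model of Kubernetes or of any real system: the function name `simulate`, the gain of 1.2, and the shared value are all assumptions chosen for illustration. Each proportional controller settles the value on its own, but two of them acting on the same value without knowing about each other overcorrect, and the error grows.

```python
def simulate(gains, start=10.0, target=0.0, steps=20):
    """Run independent proportional controllers that all adjust one shared value."""
    value = start
    for _ in range(steps):
        error = value - target
        # Each controller reacts to the same error, unaware of the others.
        value -= sum(gain * error for gain in gains)
    return value


# One loop alone: each step multiplies the error by (1 - 1.2) = -0.2, so it shrinks.
alone = simulate([1.2])

# Two identical loops: each step multiplies the error by (1 - 2.4) = -1.4, so it grows.
together = simulate([1.2, 1.2])

print(f"one controller, final value:  {alone:.6f}")
print(f"two controllers, final value: {together:.2f}")
```

Neither controller is faulty when examined in isolation, so a search for the broken component comes up empty. The trouble only exists in the interaction.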

Under the RE model, incidents are perfect storms of complex interactions across multiple components under a particular set of circumstances. Even though this incident revealed a dangerous interaction between components A and B, the next incident may be an interaction between components D and E, and the D-E interaction may be even more likely to occur than the A-B one is to recur.

In addition, changing the behavior of components A or B might enable new failure modes by creating the opportunity for new unexpected interactions with other components, even though it has prevented the A-B interaction.

Adaptations to compensate for existing faults

Here’s a different picture. Imagine your system as a collection of components, which I’ve denoted here as rounded boxes. To keep things simple, I’m not going to show the interactions.

Now, imagine that you experience an incident, you do an RCA, and you identify as the underlying causes that two of the components behaved incorrectly in some way. There was a fault in those components that wasn’t noticed before the incident.

The RCA model would look for the root cause of these faults, perhaps a problem in the way that these components were validated. For example, perhaps there was a certain type of testing that wasn’t done, and that’s how the problem went undetected. As a result, not only would these two components be fixed, but we would also have improved the process by which we verify components, meaning fewer component problems in the future.

Now, let’s look at the RE model. This model tells us that there are what Cook calls latent failures that are distributed throughout the system: they’re there, but we don’t know where they are. Sometimes these latent failures are referred to as faults.

Despite the presence of all of these faults in our complex system, the system actually functions most of the time. Cook describes this by observing that “complex systems are heavily and successfully defended against failure” and “complex systems run in degraded mode”. Even though your system is riddled with faults, it still functions well enough to be useful, although it never functions perfectly.

This is actually one of the secrets of services that seem reliable to their end users. It’s not that they never encounter problems, it’s that they are able to compensate for those problems in order to keep working correctly. In the RE model, successful complex systems are always fault-tolerant, because they need to be in order to succeed.
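As a rough sketch of what compensating can look like in code, consider a lookup that hides a faulty dependency from its caller. Everything here is hypothetical and invented for illustration: `fetch_price`, `LAST_KNOWN_PRICES`, `price_for`, and the item IDs do not come from any real service.

```python
def fetch_price(item_id: str) -> float:
    """Hypothetical primary dependency with a latent fault for one input."""
    if item_id == "sku-42":
        raise TimeoutError("pricing backend did not respond")
    return 9.99


# Hypothetical cache of earlier good answers.
LAST_KNOWN_PRICES = {"sku-42": 12.50}


def price_for(item_id: str) -> float:
    """Compensate for a failing primary by serving the last known good value."""
    try:
        return fetch_price(item_id)
    except TimeoutError:
        # The fault is still there; the caller just never sees it.
        return LAST_KNOWN_PRICES[item_id]


print(price_for("sku-1"))
print(price_for("sku-42"))
```

The fault in `fetch_price` never goes away. The system simply keeps working around it, which is the sense in which it runs in degraded mode. In real systems, much of this compensation is done by people rather than by `try`/`except` blocks.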

Because there are so many latent failures, and they change over time, the RCA approach (find a root cause, and root it out) can’t generate continuous improvement under the RE model. Because an incident was due to a random combination of multiple latent failures, and because there are so many of these failures, simply eliminating the recurrence of a specific combination doesn’t buy you much: future incidents are very likely to be different because they’ll involve novel combinations of latent failures that you don’t see.
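Some back-of-the-envelope arithmetic shows why ruling out one combination buys so little. This sketch makes simplifying assumptions that are mine, not Cook’s: incidents involve exactly two latent faults, every pair is equally likely, and the set of faults stays fixed. The function name `share_removed_by_one_fix` is made up for this example.

```python
from math import comb


def share_removed_by_one_fix(num_latent_faults: int) -> float:
    """Fraction of all possible fault pairs ruled out by preventing one specific pair."""
    return 1 / comb(num_latent_faults, 2)


for n in (10, 100, 1000):
    pairs = comb(n, 2)  # number of distinct two-fault combinations
    share = share_removed_by_one_fix(n)
    print(f"{n} latent faults: {pairs} possible pairs, one fix covers {share:.4%}")
```

With 100 latent faults there are 4,950 possible pairs, so preventing one specific pair covers about 0.02% of them. Real incidents often involve more than two factors, and the faults keep changing, which only makes the picture worse.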

In contrast, the RE approach emphasizes the idea of identifying how your system adapts to succeed in the presence of all these faults. The desired outcomes of this approach are to increase your ability to continue adapting to faults in the future, as well as to find areas in your system where you are less able to adapt effectively. It means understanding that your system is fault tolerant, and using incidents to understand how the people in your system are able to adapt to deal with faults.

This also includes understanding how those adaptations can fail to keep the system running. After all, when an incident happened, those adaptations weren’t sufficient. But there’s a huge difference between “this process led to a fault and so it needs to be changed” (RCA) and “the way we normally work is typically effective at working around problem X, but it didn’t work in these particular circumstances because Y and Z and …”

The RCA approach is about finding the generators of faults in your organization and removing them. The RE approach is about finding the sources of fault tolerance in your organization so you can nurture and grow them. The RE folks call this adaptive capacity. Remember, your system contains a multitude of undiscovered faults, and those faults will ultimately result in surprising incidents, no matter how many root causes you identify and eliminate. Consider trying the RE approach. After all, you’re going to need all of the fault tolerance you can get.