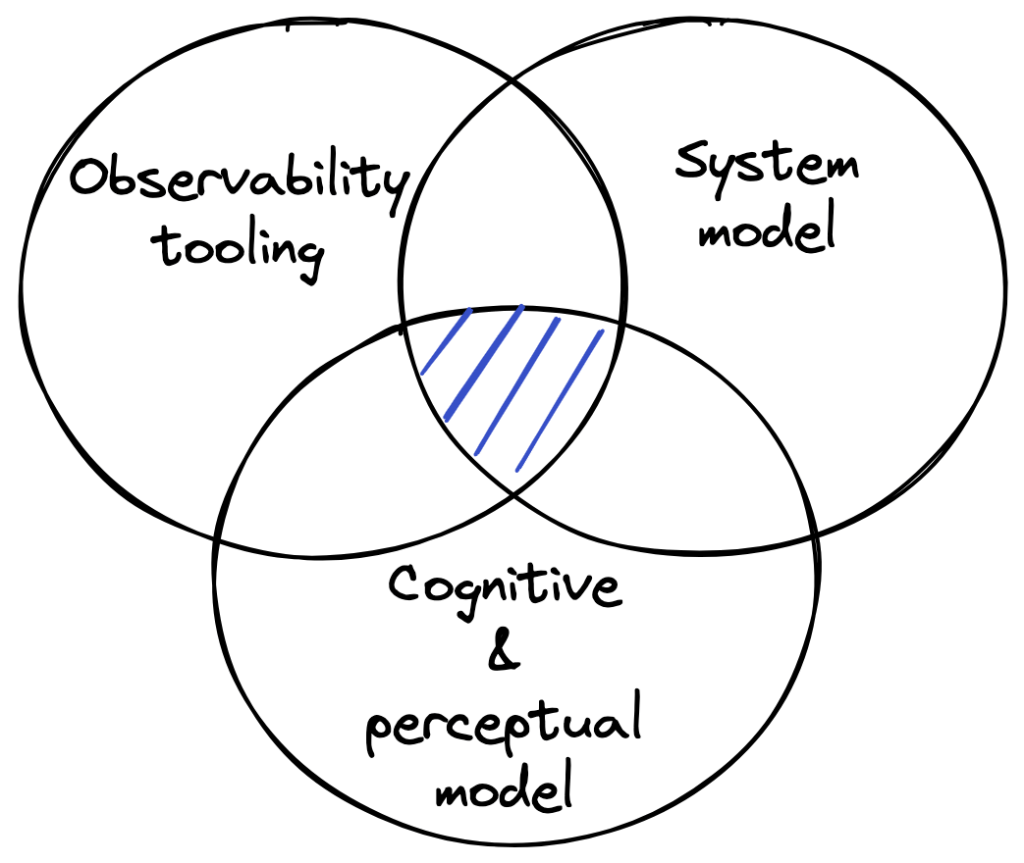

An effective observability solution is one that helps an operator quickly answer the question “Why is my system behaving this way?” This is a difficult problem to solve in the general case because it requires the successful combination of three very different things: observability tooling, a system model, and a cognitive & perceptual model.

Most talk about observability centers on vendor tooling. I’m using observability tooling here to refer to the software that you use for collecting, querying, and visualizing signals. There are a lot of observability vendors these days (for example, check out Gartner’s APM and Observability category). But the tooling is only one part of the story: at best, it can provide you with a platform for building an observability solution. The quality of that solution is going to depend on how good the system model and the cognitive & perceptual model encoded in it are.

A good observability solution will have embedded within it a hierarchical model of your system. This means it needs to provide information about what the system is supposed to do (its functional purpose), about the subsystems that make up the system, and so on all the way down to the low-level details of the components (specific lines of code, CPU utilization on the boxes, etc.).

As an example, imagine your org has built a custom CI/CD system that you want to observe. Your observability solution should give you feedback about whether the system is actually performing its intended functions: Is it building artifacts and publishing them to the artifact repository? Is it promoting them across environments? Is it running verifications against them?

Now, imagine that there’s a problem with the verifications: it’s starting them but isn’t detecting that they’re complete. The observability solution should enable you to move from that function (verifications) to the subsystem that implements it. That subsystem is likely to include both application logic and external components (e.g., Temporal, MySQL). Your observability solution should have encoded within it the relationships between the functional aspects and the relevant implementations to support the work of moving up and down the abstraction hierarchy while doing the diagnostic work. Building this model can’t be outsourced to a vendor: ultimately, only the engineers who are familiar with the implementation details of the system can create this sort of hierarchical model.
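To make the idea of an encoded hierarchy a bit more concrete, here is a minimal sketch in Python of what such a model might look like for the CI/CD example. Everything in it is an assumption for illustration: the `Node` class, the `drill_down` and `functions_depending_on` helpers, and names like "verification poller" and "promotion service" are hypothetical, and a real model would be tied to the signals your tooling collects rather than hard-coded.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One entry in the hierarchy: a function, a subsystem, or a component."""
    name: str
    level: str  # "function", "subsystem", or "component"
    children: list["Node"] = field(default_factory=list)

    def drill_down(self, depth=0):
        """Walk down the hierarchy from this node, yielding (depth, node) pairs."""
        yield depth, self
        for child in self.children:
            yield from child.drill_down(depth + 1)


def functions_depending_on(functions, component_name):
    """Walk back up: which top-level functions rely on the named component?"""
    return [
        fn.name
        for fn in functions
        if any(node.name == component_name for _, node in fn.drill_down())
    ]


# Components (Temporal and MySQL come from the example; the poller is hypothetical).
temporal = Node("Temporal", "component")
mysql = Node("MySQL", "component")
poller = Node("verification poller", "component")

# Subsystems that implement each function (names are hypothetical).
verification_runner = Node("verification runner", "subsystem", [poller, temporal, mysql])
promotion_service = Node("promotion service", "subsystem", [mysql])

# Functional purposes of the CI/CD system.
verify = Node("run verifications", "function", [verification_runner])
promote = Node("promote artifacts across environments", "function", [promotion_service])
FUNCTIONS = [verify, promote]

if __name__ == "__main__":
    # Moving down: from the misbehaving function to what implements it.
    for depth, node in verify.drill_down():
        print("  " * depth + f"{node.name} ({node.level})")
    # Moving up: if MySQL looks unhealthy, which functions are at risk?
    print(functions_depending_on(FUNCTIONS, "MySQL"))
```

The point of the sketch is only that the relationships are written down somewhere the observability solution can use them, so that an operator looking at a failing function can get to the relevant subsystem and components, and back again, without having to reconstruct the mapping from memory.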

Good observability tooling and a good system model alone aren’t enough. You need to understand how operators do this sort of diagnostic work in order to build an interface that supports it well. That means you need a cognitive model of the operator to understand how the work gets done. You also need a perceptual model of how humans effectively navigate through the world, so you can leverage it to build an interface that will enable operators to move fluidly across data sources from different systems. You need to build an operational solution with high visual momentum, so that an operator doesn’t have to navigate through too many displays.

Every observability solution has an implicit system model and an implicit cognitive & perceptual model. However, while work on both the abstraction hierarchy and visual momentum dates back to the 1980s, I’ve never seen this work, or the field of cognitive systems engineering in general, explicitly referenced in discussions of observability. This work remains largely unknown in the tech world.